Essential Skills for System Management

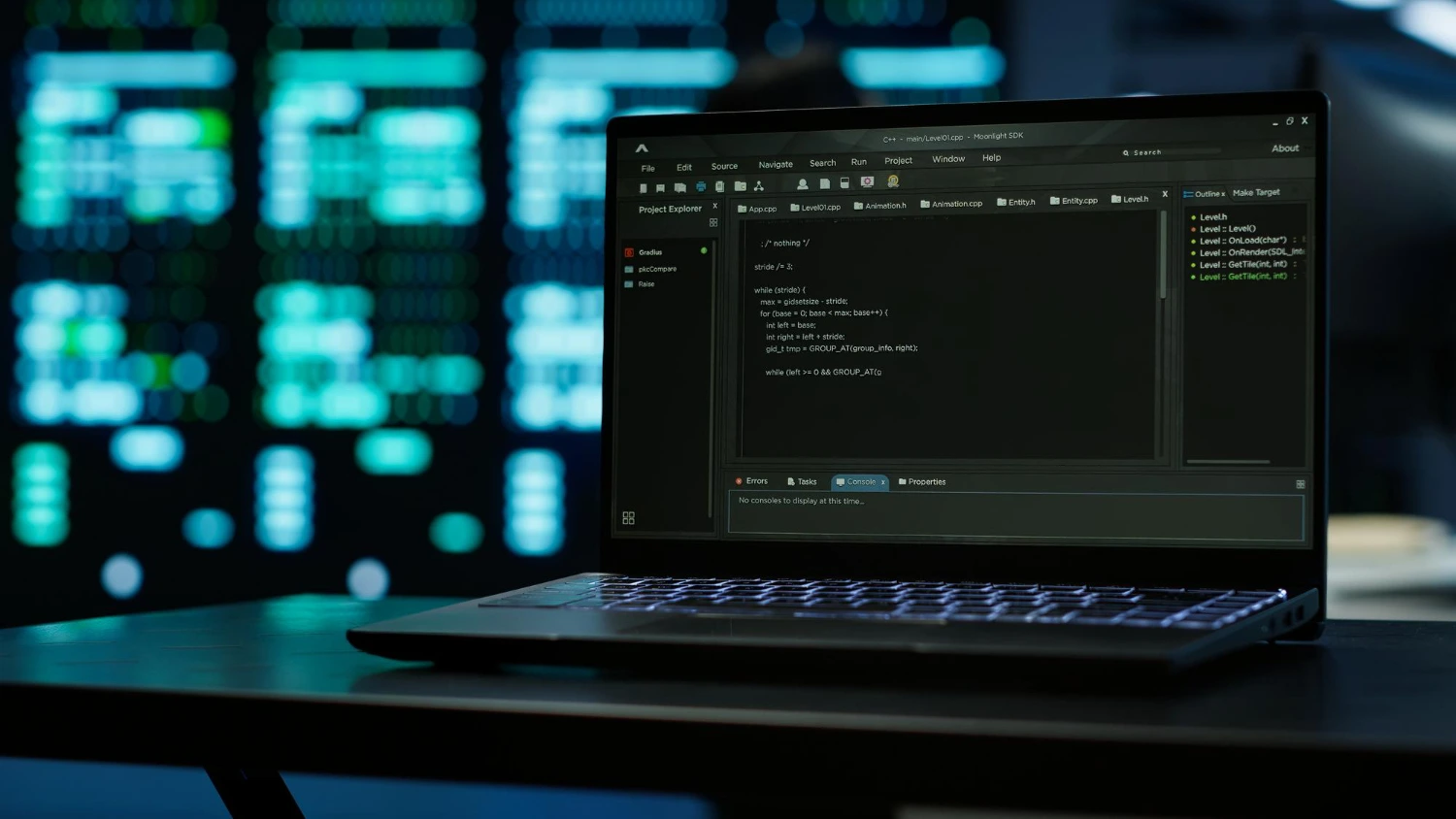

Every system operator needs a solid grasp of the command-line interface to troubleshoot issues quickly. From a terminal you can configure network settings, manage file permissions, and monitor system resources without opening a single graphical tool. Relying on graphical interfaces slows down your workflow during critical failures, and many servers have no graphical environment to fall back on.
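To make the file-permission task concrete, here is a minimal sketch in Python (chosen only for illustration, and assuming a POSIX system such as Linux). It does what `chmod 640 file` followed by `ls -l file` does at the terminal: it sets the permission bits and reads them back in the familiar `-rw-r-----` notation. The helper name `set_mode` is hypothetical.

```python
import os
import stat
import tempfile


def set_mode(path: str, mode: int) -> str:
    """Apply permission bits (like `chmod`) and return the `ls -l` style string."""
    os.chmod(path, mode)
    return stat.filemode(os.stat(path).st_mode)


if __name__ == "__main__":
    # Create a throwaway file so the example never touches real data.
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        path = tmp.name
    try:
        # 0o640: owner read/write, group read, others nothing (same as `chmod 640`)
        print(set_mode(path, 0o640))  # -rw-r-----
    finally:
        os.remove(path)
```

The octal value 640 reads digit by digit: 6 (4 read + 2 write) for the owner, 4 (read) for the group, and 0 for everyone else.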

Writing shell scripts automates repetitive tasks and saves you hours of manual work. Short scripts can back up data, update packages, and check server health, and a scheduler such as cron can run them automatically at fixed times. Learning Bash scripting turns you from a basic user into a highly efficient operator.
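A health check is a typical first automation script. The sketch below, again in Python for illustration and assuming a Unix-like host (`os.getloadavg` is not available on Windows), flags high disk usage and a one-minute load average above the CPU count. The 90% disk limit, the load rule, and the function names `disk_used_percent` and `health_warnings` are hypothetical choices, not standards; in Bash the same readings come from `df` and `uptime`.

```python
import os
import shutil
from typing import List


def disk_used_percent(path: str = "/") -> float:
    """Return the percentage of the filesystem holding `path` that is in use."""
    usage = shutil.disk_usage(path)  # named tuple: total, used, free (bytes)
    return 100.0 * usage.used / usage.total


def health_warnings(disk_pct: float, load_1min: float, cpu_count: int,
                    disk_limit: float = 90.0) -> List[str]:
    """Compare readings against hypothetical thresholds and list any problems."""
    warnings: List[str] = []
    if disk_pct >= disk_limit:
        warnings.append(f"disk usage at {disk_pct:.1f}%")
    # Assumption: load above the CPU count means work is queuing up.
    if load_1min > cpu_count:
        warnings.append(f"1-minute load {load_1min:.2f} exceeds {cpu_count} CPUs")
    return warnings


if __name__ == "__main__":
    load_1min, _, _ = os.getloadavg()  # Unix-only; raises OSError if unobtainable
    cpus = os.cpu_count() or 1         # cpu_count() may return None
    problems = health_warnings(disk_used_percent("/"), load_1min, cpus)
    for problem in problems:
        print("WARNING:", problem)
    if not problems:
        print("OK")
```

To run it every five minutes, a crontab entry such as `*/5 * * * * /usr/bin/python3 /opt/scripts/health_check.py` would work; the script path is hypothetical. Keeping the threshold logic in `health_warnings` separate from the measurements lets you test it without touching the live system.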